“We’ve signed a deal to tap all of the compute capacity at SpaceX’s Colossus 1 data center.”

That single sentence, dropped by Anthropic on Wednesday, reshapes how investors should think about both companies heading into one of the most anticipated IPOs of the decade. The Claude maker is locking in access to more than 220,000 NVIDIA GPUs within the month, and the timing lands SpaceX a marquee AI customer just as it prepares to pitch itself to public markets at a valuation that could hit $2 trillion.

The deal is immediately material for Claude subscribers. Anthropic raised Claude Opus API rate limits and doubled Claude Code’s five-hour caps for Pro, Max, Team, and Enterprise customers, all effective Wednesday. For developers who’ve been hitting ceilings on long coding sessions or high-volume API calls, that’s a concrete capacity bump, not a roadmap promise.

What Colossus 1 Actually Delivers

Colossus 1 is not a modest facility. At 220,000-plus GPUs, it ranks among the largest single-site AI training clusters ever assembled. For context, OpenAI’s reported training runs for GPT-4 used somewhere in the range of 10,000 to 25,000 GPUs (estimates vary because OpenAI doesn’t disclose exact figures). Anthropic is now claiming exclusive access to roughly 9 to 22 times that GPU count at a single location.

The exclusivity matters. By its own account, Anthropic isn’t sharing this capacity with other tenants; it’s using all of it. That suggests either a training push for a next-generation Claude model, a massive inference scaling effort to handle growing API demand, or both. Anthropic hasn’t specified the split, but the immediate subscriber-facing changes (doubled rate limits, higher API caps) indicate at least some of the capacity is going straight into serving production workloads.

Compute is the lifeblood of large-language-model performance. More GPUs mean faster training cycles, larger models, and the ability to serve more simultaneous users without degrading response times. For Anthropic, which has been playing catch-up to OpenAI in some commercial metrics while leading in safety research, this is a capacity equalizer.

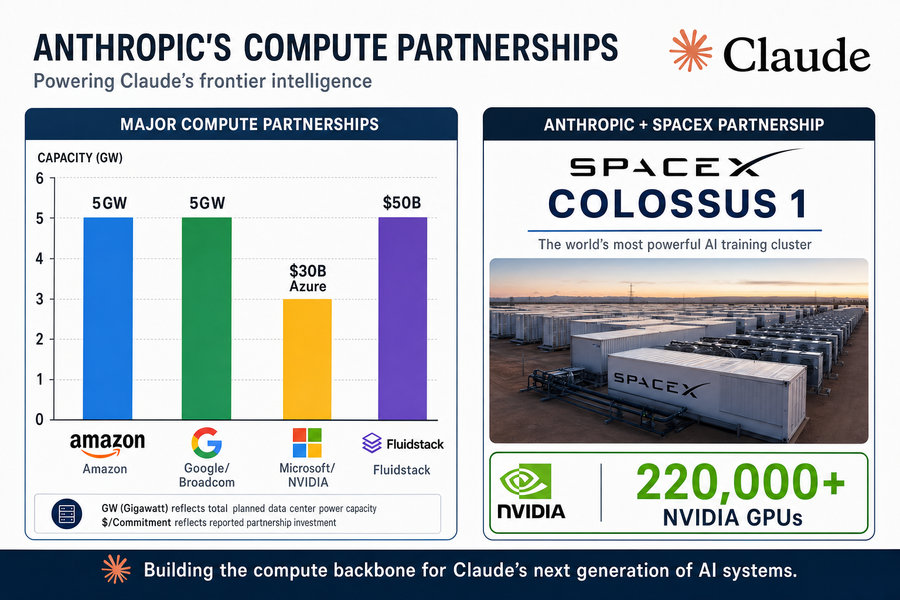

Anthropic’s Compute Stack Now Exceeds 10 Gigawatts Committed

The Colossus 1 deal isn’t a one-off. It slots into a compute acquisition strategy that has Anthropic accumulating partnerships at a pace that would have seemed absurd three years ago.

Here’s what the company has stacked up:

- An agreement with Amazon covering up to 5 gigawatts of capacity, including nearly 1 gigawatt of new capacity coming online by year-end.

- A 5 gigawatt deal with Google and Broadcom scheduled to come online in 2027.

- A Microsoft-NVIDIA strategic partnership covering $30 billion of Azure capacity.

- A $50 billion U.S. AI infrastructure investment with Fluidstack.

Adding those numbers together (and this is rough, because GPU counts per gigawatt vary by chip generation and cooling efficiency), Anthropic is positioning itself as one of the largest compute consumers on the planet. The SpaceX agreement doesn’t come with a disclosed power figure, but 220,000 H100-class GPUs would draw roughly 150 to 180 megawatts at full load for the accelerators plus cooling overhead (about 154 megawatts for the GPUs alone at roughly 700 watts each), before counting host CPUs, networking, and storage.
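As a rough check on that power estimate, the arithmetic can be sketched in a few lines of Python. The 700-watt per-GPU figure and the overhead multipliers are assumptions for illustration, not numbers from the announcement, and the function name is hypothetical.

```python
def cluster_power_mw(gpu_count: int, watts_per_gpu: float, overhead: float = 1.0) -> float:
    """Estimate total draw in megawatts: GPUs x per-GPU watts x overhead multiplier."""
    return gpu_count * watts_per_gpu * overhead / 1_000_000


GPU_COUNT = 220_000      # GPU count cited in the article
WATTS_PER_GPU = 700.0    # assumption: H100-class board power at full load

# Assumed overhead multipliers for cooling and power delivery (illustrative only).
for overhead in (1.0, 1.1, 1.2):
    mw = cluster_power_mw(GPU_COUNT, WATTS_PER_GPU, overhead)
    print(f"overhead x{overhead:.1f}: {mw:.1f} MW")
```

Under these assumptions the GPUs alone come to about 154 MW, and a 1.2x overhead multiplier pushes the total to roughly 185 MW. Host CPUs, networking, and storage are not modeled, so a real facility would likely draw more.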

Why does a company that started as a safety-focused research lab need this much silicon? Because the AI infrastructure race has become an arms race. OpenAI, Google DeepMind, and xAI are all scaling aggressively. If Anthropic wants Claude to remain competitive on capability benchmarks while maintaining its safety edge, it needs the hardware to run experiments at frontier scale.

SpaceX’s AI Pivot and the IPO Narrative

SpaceX filed confidentially with the SEC on April 1 for an IPO that could value the company between $1.75 trillion and $2 trillion. The public S-1 filing is expected by late May, with the investor roadshow set for the week of June 8.

Until now, SpaceX’s revenue story has centered on two businesses: launch services (sending satellites and crew to orbit) and Starlink (satellite internet). Both are capital-intensive, hardware-heavy operations with long payback periods. Starlink in particular has faced questions about unit economics and subscriber churn in some markets.

Adding “AI infrastructure provider” to the narrative changes the investor pitch. Data center colocation is a high-margin, recurring-revenue business. If SpaceX can credibly claim that major AI labs are paying for access to its GPU clusters, it diversifies the revenue base away from launch-manifest volatility and Starlink’s subscriber math.

The Anthropic deal lands in the S-1 disclosure window. Investors will see a named, credible customer with a multi-year track record and billions in prior funding. That’s more persuasive than a generic “AI infrastructure” bullet point on a slide deck.

There’s also a subtle competitive angle here. Elon Musk’s own AI venture, xAI, competes directly with Anthropic. By selling compute to a rival, SpaceX is effectively monetizing both sides of the AI race. Whether that creates awkward boardroom dynamics down the line is anyone’s guess, but for now, it’s a rational capital allocation move: xAI gets access to Musk’s network and capital, SpaceX gets Anthropic’s cash.

Orbital Compute Is the Longer-Term Wildcard

Buried in the announcement is a phrase that could become more significant over time: Anthropic “flagged interest in partnering with SpaceX on orbital AI compute capacity.”

Orbital compute sounds like science fiction, but the physics are straightforward. Satellites in low Earth orbit can run electronics, and power is available via solar panels. The constraints have always been cooling (with no air to carry heat away, it has to be radiated) and bandwidth (getting data up and down). Starlink’s mesh architecture partially addresses the bandwidth problem, and passive radiator cooling systems have improved.

Why would an AI lab care about orbital compute? A few reasons:

- Latency arbitrage. For certain applications (high-frequency trading signals, global edge inference), reducing the number of ground hops can shave milliseconds.

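- Jurisdictional ambiguity. How data-protection rules apply to data processed in orbit is less settled than on the ground, which could matter for privacy-sensitive workloads (though satellites generally remain under the jurisdiction of the state that registers them).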

- Resilience. Orbital infrastructure is harder to disrupt with terrestrial events (natural disasters, grid failures, physical attacks).

None of these are pressing needs for Claude today. But Anthropic flagging interest signals that both companies are thinking about where AI infrastructure goes after the current data-center buildout saturates.

What This Means for the Broader AI Compute Market

If you’re tracking AI infrastructure as an investment theme (and crypto investors increasingly are, given the overlap with decentralized compute networks), the Anthropic-SpaceX deal is a data point worth filing.

First, it confirms that hyperscaler capacity isn’t enough. Anthropic already has agreements with Amazon, Google, and Microsoft. Yet it’s still signing deals with SpaceX, Fluidstack, and others. Demand for frontier-AI compute is outstripping what even the largest cloud providers can supply on reasonable timelines.

Second, it validates non-hyperscaler entrants. SpaceX isn’t traditionally a data center company. Neither is Fluidstack. The fact that serious AI labs are signing with them suggests the market is big enough for new players, and that differentiated offerings (SpaceX’s power access, Fluidstack’s distributed architecture) can win share.

For decentralized compute projects like Render or Akash, this is both a validation and a competitive concern. The validation: demand for compute is real and growing. The concern: well-funded centralized players are scaling faster and can offer reliability guarantees that decentralized networks still struggle to match.

Anthropic isn’t using decentralized compute for frontier training. But the overflow market (inference, fine-tuning, experimentation) might be where decentralized networks find their niche, serving the long tail of AI developers who can’t get allocations from the big players.

Rate-Limit Doubles and API Economics

For developers and enterprises already paying for Claude, the immediate question is whether the rate-limit increases justify current pricing or signal future price hikes.

Doubling Claude Code’s five-hour rate limits is meaningful for coding workflows. If you’ve been running into caps during extended debugging sessions or multi-file refactoring, that ceiling just got higher. Anthropic didn’t announce a price increase alongside the capacity bump, which suggests it’s absorbing the marginal cost (for now) to drive adoption.

Raising Claude Opus API rate limits is more directly revenue-relevant. Opus is the flagship model, and API access is how enterprise customers integrate Claude into production systems. Higher rate limits mean enterprises can route more traffic through Claude without provisioning fallback models or implementing client-side throttling.
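For readers who haven’t built one, client-side throttling usually looks something like a token bucket that sits in front of outbound API calls. The sketch below is generic and illustrative: the class name, rates, and injected clock are hypothetical, and it is not part of any Anthropic SDK.

```python
import time


class TokenBucket:
    """Client-side throttle: sustains `rate` requests/second with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate            # tokens refilled per second
        self.capacity = capacity    # maximum burst size
        self.tokens = capacity      # start with a full bucket
        self.clock = clock          # injectable clock, handy for testing
        self.last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` tokens if available, else False."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Demo with a fake clock so nothing actually sleeps.
fake_now = [0.0]
bucket = TokenBucket(rate=2.0, capacity=2.0, clock=lambda: fake_now[0])
print([bucket.try_acquire() for _ in range(3)])  # [True, True, False]
```

When a provider doubles a limit, a client built this way only needs its `rate` and `capacity` raised to match. The higher the provider-side ceiling, the less often `try_acquire` returns False and forces a retry or a fallback model.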

The risk for Anthropic is that capacity scaling runs ahead of revenue scaling. GPUs are expensive. Even at bulk rates, 220,000 H100-class GPUs represent billions of dollars in capital (either owned or leased). If subscriber growth doesn’t match capacity growth, margins compress.

But that’s a medium-term concern. The market signal today is that Anthropic is willing to spend to compete, and it has the partnerships to do so.

The SEC Filing Timeline

SpaceX’s IPO process is on a tight schedule. The company filed confidentially on April 1. SEC rules require the public S-1 to be filed at least 15 days before the roadshow begins, which means late May for a June 8 roadshow start.
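The deadline implied by that 15-day rule is simple date arithmetic. The sketch below assumes the roadshow begins on June 8, 2026; the year is an assumption inferred from context, and the function name is hypothetical.

```python
from datetime import date, timedelta

MIN_DAYS_BEFORE_ROADSHOW = 15  # the 15-day minimum described in the article


def latest_public_filing(roadshow_start: date, min_days: int = MIN_DAYS_BEFORE_ROADSHOW) -> date:
    """Return the last calendar date a public filing can land and still precede the roadshow by `min_days`."""
    return roadshow_start - timedelta(days=min_days)


roadshow_start = date(2026, 6, 8)  # assumption: year not stated in the article
print(latest_public_filing(roadshow_start).isoformat())  # 2026-05-24
```

On that assumption the public S-1 would need to appear by May 24, which is consistent with the late-May expectation.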

Investors will get their first look at SpaceX’s financials, including whatever disclosure exists about the AI infrastructure business. The Anthropic deal will likely appear in either the revenue breakdown or the material contracts section. How SpaceX characterizes the economics (term length, pricing structure, exclusivity provisions) will shape analyst models.

If you’re following the IPO market more broadly, SpaceX’s listing is a bellwether for private-company valuations. A $2 trillion debut would make SpaceX one of the most valuable companies on Earth, larger than all but a handful of tech giants. It would also validate the patient-capital model that kept SpaceX private for over two decades.

Elon Musk’s recent confirmation of X Money’s April 2026 launch showed how Musk-adjacent announcements can move crypto markets, particularly Dogecoin, which surged 10% on that news. The SpaceX IPO could trigger similar sentiment trades if investors start pricing in Musk ecosystem synergies.

Second-Order Effects Worth Watching

A few implications aren’t in the press release but follow logically:

Power competition intensifies. A 220,000-GPU cluster draws serious power. SpaceX presumably has access to favorable power contracts (possibly tied to Starbase operations in Texas). Other AI infrastructure players without similar access will face cost disadvantages.

NVIDIA’s position strengthens further. Not every deal on Anthropic’s list is NVIDIA-based (the Google and Broadcom agreement points to custom silicon), but Colossus 1 is another NVIDIA install base that will need upgrades, maintenance, and eventually next-generation chips. AMD, Intel, and custom-chip startups are still fighting for market share.

Anthropic’s independence becomes a board-level question. With Amazon, Google, Microsoft, and now SpaceX all holding significant commercial relationships, Anthropic is deeply embedded in multiple ecosystems. That’s good for access but potentially complicated for governance.

Competitor response. OpenAI, xAI, and Google DeepMind will note the capacity expansion. Expect announcements of their own in the coming weeks, either new partnerships or capex commitments, as the compute arms race continues.

The AI infrastructure buildout is reminiscent of the telecom fiber boom of the late 1990s, with a crucial difference: the demand is real and measurable today. AI labs are hitting capacity constraints and paying premium prices to solve them. Whether that demand curve flattens in two years or five years remains to be seen, but right now, compute supply is the bottleneck.

Anthropic’s bet is that securing capacity today, even at high cost, is cheaper than being capacity-constrained when the next training run matters. SpaceX’s bet is that AI infrastructure is a durable revenue line worth highlighting to public-market investors. Both bets could pay off. Or the AI winter crowd could be vindicated. Either way, the capital is deployed, the GPUs are racked, and Claude just got faster.

For anyone building on or competing with Claude, the message is clear: Anthropic isn’t slowing down.

Note: nothing written here is a trade signal. Price movement discussed above is history, not a forecast. Verify anything you plan to act on.